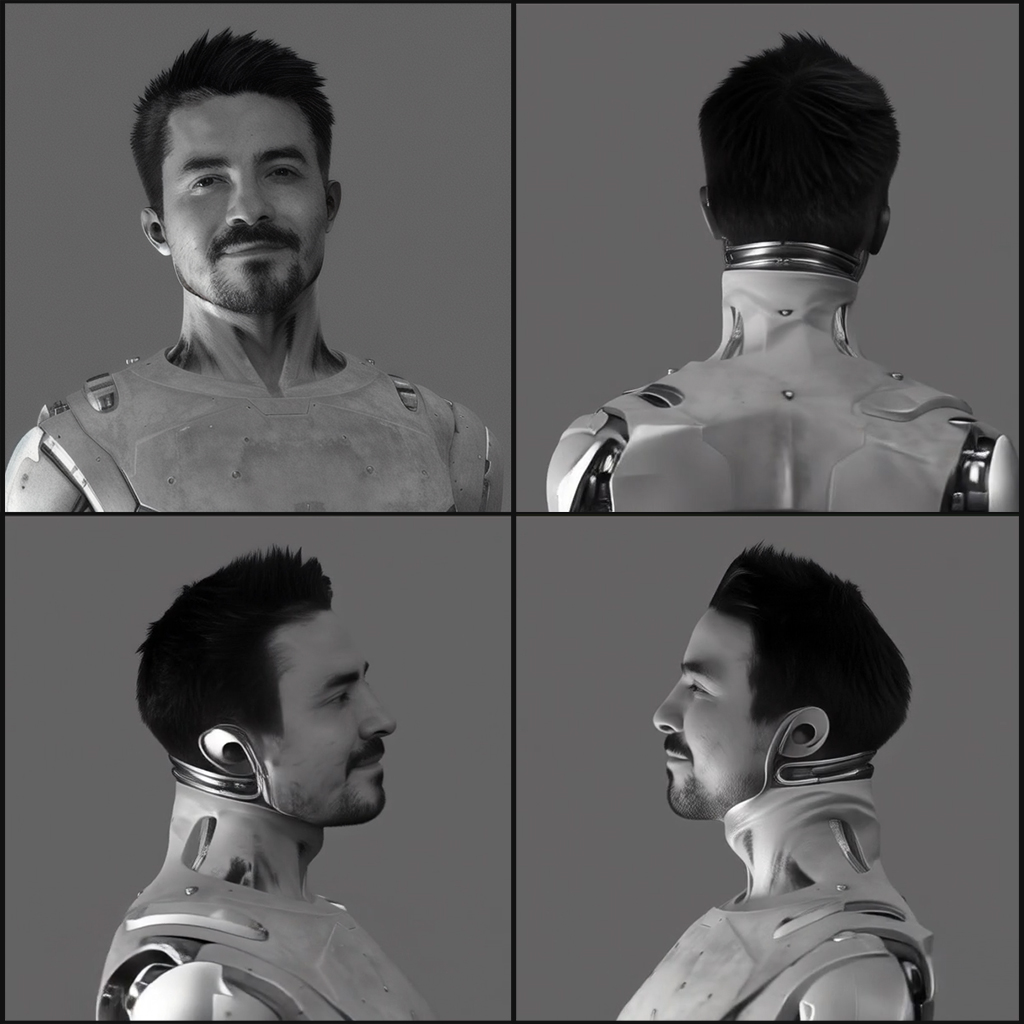

These are some early experiments in generating different perspectives of a character from a single view or image. Specifically, we will be generating a turntable video that rotates the character in place.

This has several potential applications, both in character development for conventional VFX workflows and in using images to drive consistent character generation.

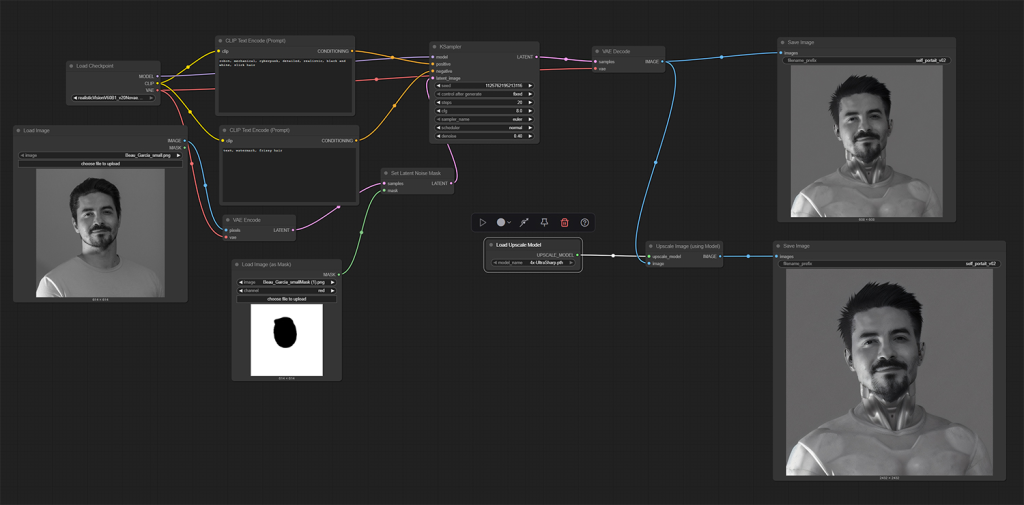

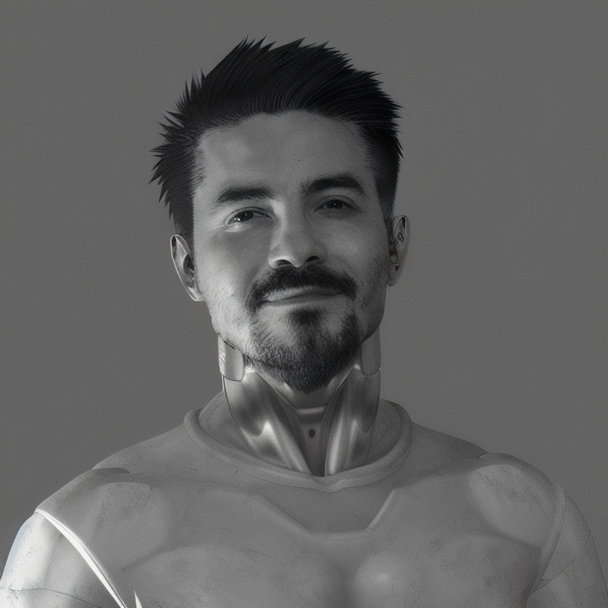

I will be experimenting with an old profile photo I had readily available. Below is a simple ComfyUI workflow that augments the image by restyling the body with a more sci-fi look. The two resulting variations, along with the original, will be used in my testing.

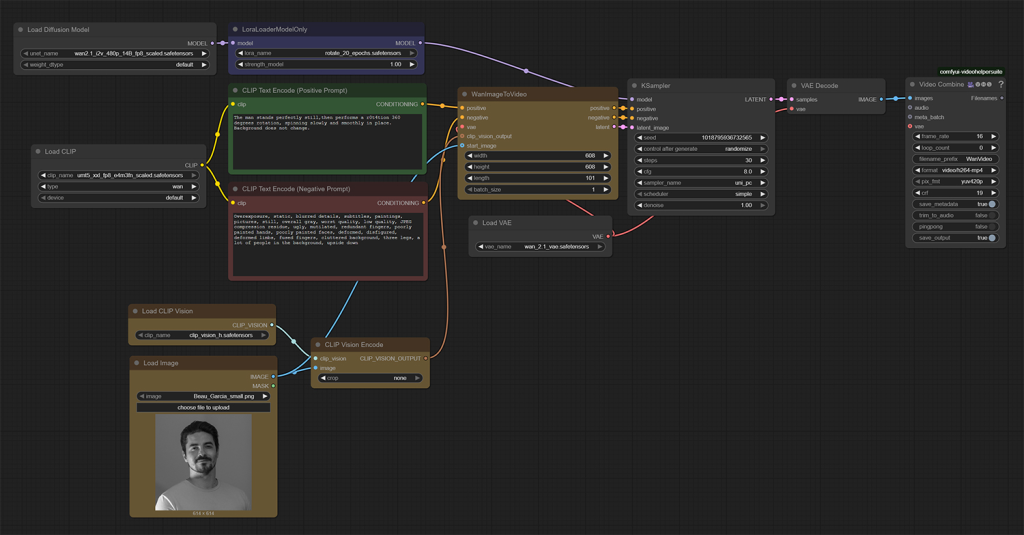

This workflow relies on ComfyUI, along with the following models and custom nodes:

- Diffusion Model: wan2.1_i2v_480p_14B_fp8_scaled.safetensors

- CLIP: umt5_xxl_fp8_e4m3fn_scaled.safetensors

- CLIP Vision: clip_vision_h.safetensors

- LoRA: rotate_20_epochs.safetensors

- Custom Nodes: ComfyUI-VideoHelperSuite
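Before loading the workflow, it can help to confirm that every file above is where ComfyUI expects it. The sketch below is a minimal pre-flight check, assuming the default folder layout of recent ComfyUI builds (`models/diffusion_models`, `models/text_encoders`, `models/clip_vision`, `models/loras`, and `custom_nodes`); older installs may keep text encoders under `models/clip` instead. The `missing_models` helper is hypothetical, not part of ComfyUI.

```python
from __future__ import annotations

import sys
from pathlib import Path

# Assumed ComfyUI subfolder for each model file listed above.
REQUIRED_MODELS = {
    "diffusion_models": "wan2.1_i2v_480p_14B_fp8_scaled.safetensors",
    "text_encoders": "umt5_xxl_fp8_e4m3fn_scaled.safetensors",
    "clip_vision": "clip_vision_h.safetensors",
    "loras": "rotate_20_epochs.safetensors",
}

# Custom node packages are assumed to live as folders under custom_nodes/.
REQUIRED_CUSTOM_NODES = ["ComfyUI-VideoHelperSuite"]


def missing_models(comfy_root: str | Path) -> list[str]:
    """Return relative paths of required files or folders that are not present."""
    root = Path(comfy_root)
    missing = []
    for subfolder, filename in REQUIRED_MODELS.items():
        if not (root / "models" / subfolder / filename).is_file():
            missing.append(f"models/{subfolder}/{filename}")
    for package in REQUIRED_CUSTOM_NODES:
        if not (root / "custom_nodes" / package).is_dir():
            missing.append(f"custom_nodes/{package}")
    return missing


if __name__ == "__main__":
    # Pass the ComfyUI install directory as the first argument (defaults to ./ComfyUI).
    gaps = missing_models(sys.argv[1] if len(sys.argv) > 1 else "ComfyUI")
    if gaps:
        print("Missing:")
        for item in gaps:
            print("  " + item)
    else:
        print("All required files found.")
```

Running it as `python check_models.py /path/to/ComfyUI` lists anything still to download; the script name is arbitrary.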

Workflow

- Input the initial image, which is used both as the start frame and as a reference image via the CLIP Vision Encode node. The model will attempt to use the initial frame as a look reference for every generated frame.

- Below is the result using variation B. It's impressive that this can be achieved with a single reference image. The character's face (in this case, mine) maintains a fairly high level of resemblance considering how little input data is required. It's far from perfect, but a strong result given that I've used only one input image.

- The perspectives share some similarities, but the inconsistencies between them are clearly visible.

- There are more advanced workflows for maintaining character consistency, such as training a custom LoRA or using multiple reference images to help guide the generation.

- The two versions below use the other two input images; both are less successful in terms of facial consistency.
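Since the same workflow is run once per input image (the original plus the two variations), the runs can be scripted against ComfyUI's local HTTP server instead of swapping the image by hand. This is a minimal sketch, assuming the workflow has been exported in ComfyUI's API format, that the input enters the graph through a core `LoadImage` node (whose `image` input holds a filename from ComfyUI's `input` folder), and that the server runs at the default `http://127.0.0.1:8188`. Because one loaded image feeds both the start frame and the CLIP Vision Encode node, patching that single node changes both uses. The helper names are hypothetical.

```python
import copy
import json
import urllib.request

# Assumed default address of a locally running ComfyUI server.
COMFY_URL = "http://127.0.0.1:8188/prompt"


def with_input_image(workflow: dict, image_name: str) -> dict:
    """Return a copy of an API-format workflow with every LoadImage node set to image_name."""
    patched = copy.deepcopy(workflow)
    for node in patched.values():
        if node.get("class_type") == "LoadImage":
            node["inputs"]["image"] = image_name
    return patched


def queue_prompt(workflow: dict, url: str = COMFY_URL) -> dict:
    """POST the workflow to ComfyUI's /prompt endpoint and return the decoded JSON reply."""
    body = json.dumps({"prompt": workflow}).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


def queue_variations(workflow_path: str, images: list, url: str = COMFY_URL) -> list:
    """Queue one turntable run per input image; returns one server reply per run."""
    with open(workflow_path, encoding="utf-8") as f:
        base = json.load(f)
    return [queue_prompt(with_input_image(base, name), url) for name in images]
```

With ComfyUI running, a call such as `queue_variations("turntable_api.json", ["original.png", "variation_a.png", "variation_b.png"])` would queue all three runs; the file names here are placeholders, and each reply typically includes a `prompt_id` for tracking the job.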